Preparing assets for Unity

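The first step is to get your assets into a format suited to their purpose. It is very important to set up a proper workflow for importing assets into Unity from 3D modeling applications such as 3ds Max, Maya, Blender, and Houdini. When you export assets from a 3D modeling application for import into Unity, consider the following.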

Scale and units

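The scale of your project and the unit of measurement you choose play a very important role in creating a believable scene. Many setups are possible in practice, but we recommend 1 Unity unit = 1 meter (100 cm), because many physics systems assume this unit size. For more information, see the Art Asset best practice guide.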

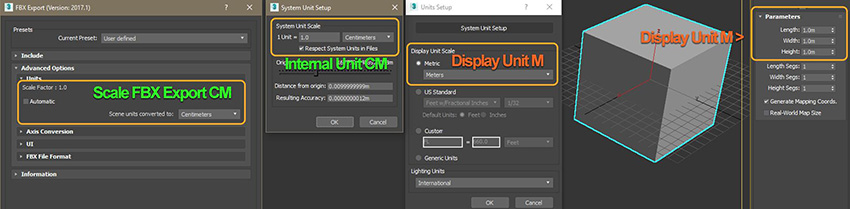

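To keep your 3D modeling application and Unity consistent, always validate the scale and size of imported GameObjects. 3D modeling applications provide unit and scale settings in their FBX export settings (see the documentation for your 3D modeling software for details). In general, the best way to match scale when importing into Unity is to set the application to work in centimeters and export FBX with automatic scaling. Even so, always confirm that the unit and scale settings match whenever you start a new project.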
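The recommendation above comes down to simple unit arithmetic. The following is a minimal Python sketch, assuming 1 Unity unit = 1 meter; the unit table and the helper names `import_scale_factor`, `to_unity_units`, and `mismatch_factor` are hypothetical illustrations, not part of Unity or any FBX exporter.

```python
# Hypothetical helpers; assumes 1 Unity unit = 1 meter, as recommended above.
# Length of one authoring unit, expressed in meters (exact SI definitions).
METERS_PER_UNIT = {
    "mm": 0.001,
    "cm": 0.01,
    "m": 1.0,
    "in": 0.0254,
    "ft": 0.3048,
}


def import_scale_factor(file_unit: str) -> float:
    """Factor that maps one file unit onto one Unity unit (meter)."""
    if file_unit not in METERS_PER_UNIT:
        raise ValueError(f"unknown unit: {file_unit!r}")
    return METERS_PER_UNIT[file_unit]


def to_unity_units(length: float, file_unit: str) -> float:
    """Convert a length authored in `file_unit` to Unity units."""
    return length * import_scale_factor(file_unit)


def mismatch_factor(authored_unit: str, assumed_unit: str) -> float:
    """How many times too large (>1) or too small (<1) an object appears
    when values authored in `authored_unit` are read as `assumed_unit`."""
    return import_scale_factor(assumed_unit) / import_scale_factor(authored_unit)
```

For example, `to_unity_units(100, "cm")` gives 1 meter, and `mismatch_factor("cm", "m")` is 100: a model authored in centimeters but read as meters comes in 100 times too large.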

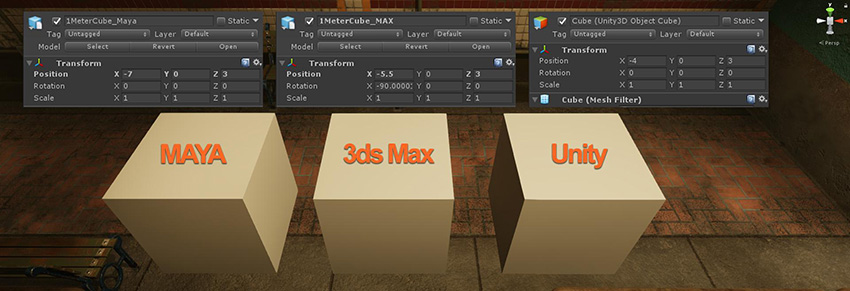

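To quickly validate your export settings, create a simple 1x1x1 meter cube in your 3D modeling application and import it into Unity. Then create a default cube in Unity (GameObject > 3D Object > Cube). This default cube is 1x1x1 meters. Use it as a reference and compare it with the imported model. With the Scale property of each Transform component set to 1,1,1, the two cubes should look exactly the same.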
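The cube test can also be expressed as a numeric comparison. This is a minimal Python sketch, assuming you have already read the imported cube's world-space size in meters (for example from its bounds in the Editor) with its Transform Scale at 1,1,1; `size_ratios` and `matches_reference` are hypothetical names.

```python
# Hypothetical check for the 1x1x1 m cube test described above.
# Assumes the imported cube's world-space size is already known (in meters)
# and its Transform Scale is 1,1,1.
REFERENCE_SIZE = (1.0, 1.0, 1.0)  # Unity's default cube, in meters


def size_ratios(imported_size):
    """Per-axis ratio of the imported cube to the reference cube."""
    return tuple(s / r for s, r in zip(imported_size, REFERENCE_SIZE))


def matches_reference(imported_size, tolerance=1e-3):
    """True if every axis is within `tolerance` of the reference size."""
    return all(abs(ratio - 1.0) <= tolerance for ratio in size_ratios(imported_size))
```

A ratio of 100 on every axis is consistent with centimeter values being read as meters; a ratio of 0.01 points the other way.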

Notes

- Maya and 3ds Max override the default unit based on the last file you opened.

- 3D modeling applications can display workspace units that differ from their internal unit setting. This can sometimes cause confusion.

Reference scale models

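When you block out a scene with placeholder or sketch geometry, it helps to have a reference scale model as a point of reference. Choose a reference model that suits the scene you are building. The tunnel sample scene uses a bench.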

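Your scene does not need to match real-world proportions exactly. Even if some objects in the scene are deliberately exaggerated, a reference scale model makes it easy to keep scale consistent across GameObjects.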
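One way to apply this during blockout is to measure every object in multiples of the reference model, so that exaggeration stays deliberate. The Python sketch below is hypothetical: the 0.45 m bench height, the object names, and the 10% tolerance are placeholder assumptions, not values from the tunnel sample scene.

```python
# Hypothetical blockout check: express heights in "reference units"
# (multiples of the reference model's height) and flag objects that drift
# from the proportion you intended, exaggerated or not.
REFERENCE_HEIGHT_M = 0.45  # assumed bench height in meters (placeholder)


def in_reference_units(height_m, reference_height_m=REFERENCE_HEIGHT_M):
    """Height expressed as a multiple of the reference model's height."""
    return height_m / reference_height_m


def flag_outliers(heights_m, intended_multiples, tolerance=0.1,
                  reference_height_m=REFERENCE_HEIGHT_M):
    """Names of objects whose height, in reference units, differs from the
    intended multiple by more than `tolerance` (relative error)."""
    outliers = []
    for name, height in heights_m.items():
        actual = in_reference_units(height, reference_height_m)
        intended = intended_multiples[name]
        if abs(actual - intended) > tolerance * intended:
            outliers.append(name)
    return outliers
```

The intended multiples can be exaggerated on purpose; the check only catches objects that drift away from whatever proportion you chose.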

Texture outputs and channels

For a material to give correct results, its textures must contain the right information. Texture authoring software such as Photoshop and Substance Painter produces consistent, predictable textures when it is set up correctly.

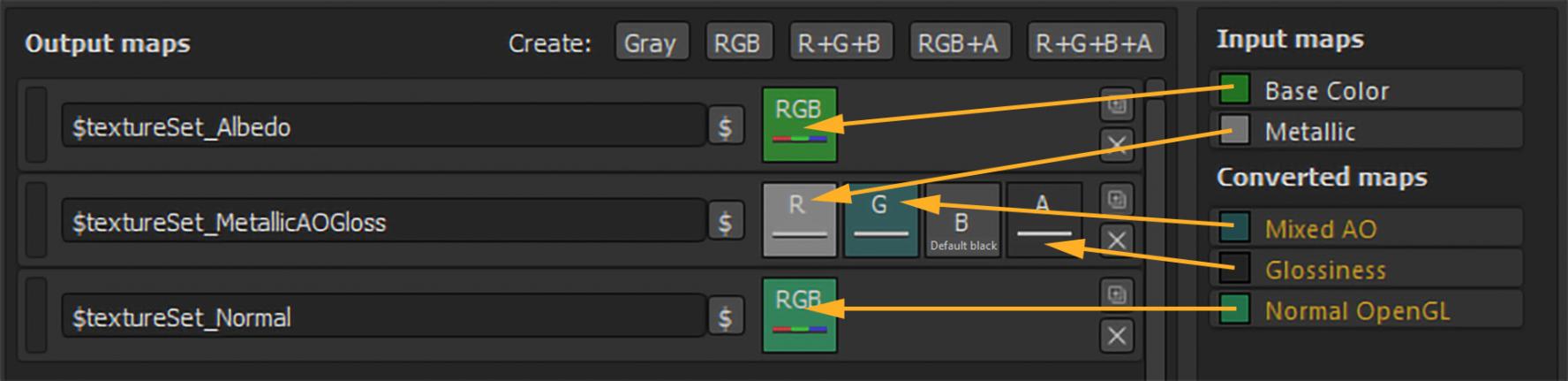

Here is an example of an existing Substance Painter configuration that outputs textures for Unity's Standard opaque material.

The textures are assigned to the Unity Standard material as follows.

| Exported output map | Assignment in a Unity Standard shader material |
|---|---|
| $textureSet_Albedo | Assign to Albedo. |
| $textureSet_MetallicAOGloss | Assign to Metallic and Occlusion. Set Smoothness Source to Metallic Alpha. |
| $textureSet_Normal | Assign to the Normal Map slot. |

Note: Packing multiple channels into a single texture such as MetallicAOGloss saves texture memory compared with exporting ambient occlusion (AO) as a separate texture. This is the best way to use Unity's Standard material.
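The packing itself is a per-pixel channel merge. This is a minimal Python sketch, assuming 8-bit grayscale inputs given as flat lists of equal length and the layout R = metallic, G = ambient occlusion, A = smoothness; that layout is an assumption about this preset. It fits what the Standard shader samples: metallic from R and, with Smoothness Source set to Metallic Alpha, smoothness from A, while the Occlusion slot reads G, so one texture can fill both slots. `pack_metallic_ao_gloss` is a hypothetical name.

```python
# Hypothetical packer for a MetallicAOGloss-style texture.
# Assumed layout: R = metallic, G = ambient occlusion, B = unused, A = smoothness.
def pack_metallic_ao_gloss(metallic, ao, smoothness, unused_blue=0):
    """Merge three 8-bit grayscale maps (flat lists of 0-255 values)
    into a list of RGBA tuples."""
    if not (len(metallic) == len(ao) == len(smoothness)):
        raise ValueError("all input maps must have the same number of pixels")
    for channel in (metallic, ao, smoothness):
        if any(not 0 <= v <= 255 for v in channel):
            raise ValueError("values must be in the 8-bit range 0-255")
    return [(m, o, unused_blue, s) for m, o, s in zip(metallic, ao, smoothness)]
```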

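When authoring textures, take care with how the alpha channel is handled. The example below shows how complicated it can be to handle transparency in PNG files in Photoshop, because of the way Photoshop processes the alpha channel of a PNG (when no external plug-in is used). In this case, provided the file size of the source texture is not a concern, an uncompressed 32-bit TGA with a dedicated alpha channel is the better choice.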

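The transparent PNG file above was created in Photoshop using black values in the alpha channel. The TGA with a dedicated alpha channel shows the expected values. As shown above, when each texture is assigned to a Standard shader material that reads smoothness data from the alpha channel, the smoothness of the material using the PNG texture is unexpectedly inverted, while the material using the TGA texture shows the correct smoothness.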
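Because the failure described above is an inversion, it can be caught by comparing the smoothness you authored with the alpha values actually stored in the exported file. This is a hypothetical Python sketch, assuming both are available as flat lists of 8-bit values (reading the file is left to whatever image library you use); `classify_alpha` and its tolerance are illustrative, not a Unity or Photoshop API.

```python
# Hypothetical validation of an exported alpha (smoothness) channel.
# `expected` holds the smoothness values you authored, `stored` the alpha
# values read back from the exported file; both are 8-bit (0-255) lists.
def classify_alpha(expected, stored, tolerance=2):
    """Return "ok", "inverted" (stored ~ 255 - expected), or "mismatch"."""
    if len(expected) != len(stored):
        raise ValueError("pixel counts differ")
    pairs = list(zip(expected, stored))
    if all(abs(e - s) <= tolerance for e, s in pairs):
        return "ok"
    if all(abs((255 - e) - s) <= tolerance for e, s in pairs):
        return "inverted"
    return "mismatch"
```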

Normal map orientation

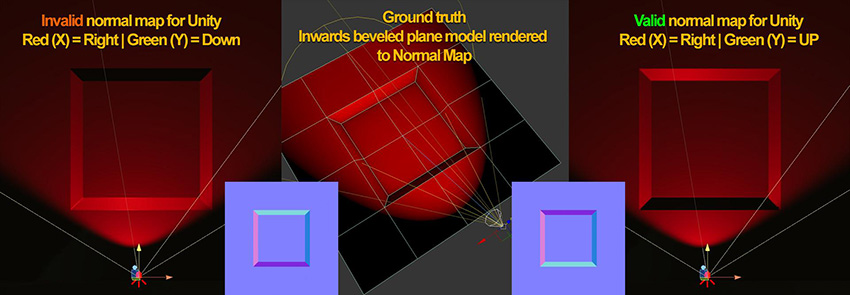

Unity reads tangent-space normal maps as follows:

- Red channel X+ as Right

- Green channel Y+ as Up

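For example, normal maps from Render to Texture in 3ds Max output Green channel Y+ as Down by default. This flips the facing direction of the surface along the Y axis, so the lighting does not look as expected. To validate normal map orientation, create a simple plane with a concave bevel (the middle image in the example) and bake it onto a flat plane. Then assign the baked normal map to a plane in Unity, light it with a Directional Light, and check whether the axis is flipped.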
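Converting a Y+ Down normal map to Unity's Y+ Up convention amounts to inverting the green channel. This is a minimal Python sketch, assuming 8-bit pixels given as tuples (RGB or RGBA); `flip_green` and `decode_normal` are hypothetical helpers.

```python
# Hypothetical helpers for 8-bit tangent-space normal maps.
def flip_green(pixels):
    """Invert the green channel (Y+ Down <-> Y+ Up); other channels,
    including an optional alpha, pass through unchanged."""
    return [(r, 255 - g, *rest) for r, g, *rest in pixels]


def decode_normal(pixel):
    """Map the RGB part of an 8-bit pixel to a vector with components in [-1, 1]."""
    return tuple(c / 255.0 * 2.0 - 1.0 for c in pixel[:3])
```

Decoding an inverted green value gives the negated Y component, since (255 - g) / 255 * 2 - 1 = -(g / 255 * 2 - 1). Flipping green therefore negates Y and leaves X and Z untouched, which is exactly the axis flip described above.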

For details on axis settings, see the documentation for your 3D modeling application.

- Page published with limited editorial review on 2018-04-19

- The best practice guide for creating believable visuals was added in Unity 2017.3