Hand data model

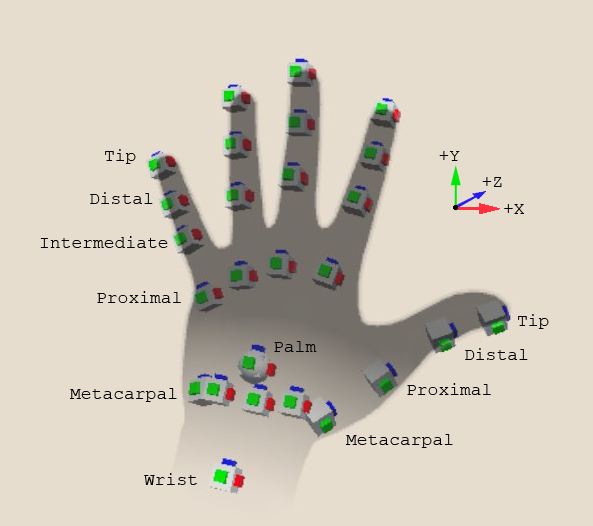

Hand tracking provides data such as position, orientation, and velocity for several points on a user's hand. The following diagram illustrates the tracked points:

Left hand showing tracked hand points

The tracked points correspond to the anatomical joints of the hand, plus the fingertips, the wrist, and the palm. Each joint is named after the bone that extends from it toward the fingertip. The thumb has one fewer joint than the other fingers because it has no intermediate phalanx.
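To make the joint counts concrete, the following Python sketch models the tracked points as a flat list of names. The names follow the OpenXR hand-joint convention (wrist, palm, then metacarpal, proximal, intermediate, distal, and tip for each finger). This is an illustrative model, not a reproduction of Unity's joint ID enum, and the helper functions are hypothetical.

```python
# Illustrative model of the tracked hand points; not the Unity API.
FINGERS = ["Thumb", "Index", "Middle", "Ring", "Little"]
FINGER_POINTS = ["Metacarpal", "Proximal", "Intermediate", "Distal", "Tip"]


def joint_names():
    """Return the tracked point names: wrist, palm, then thumb to little finger."""
    names = ["Wrist", "Palm"]
    for finger in FINGERS:
        for point in FINGER_POINTS:
            # The thumb has no intermediate phalanx, so it has no
            # "Intermediate" joint.
            if finger == "Thumb" and point == "Intermediate":
                continue
            names.append(finger + point)
    return names


def joints_per_finger(finger):
    """Count the tracked points that belong to one finger, fingertip included."""
    return sum(1 for name in joint_names() if name.startswith(finger))
```

With this layout, `len(joint_names())` is 26: the wrist, the palm, four thumb points, and five points for each of the other four fingers. That matches the 26 joints defined by the OpenXR hand-tracking extension.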

The hand API reports tracking data relative to the tracking origin, a real-world location chosen by the user's device. Distances are in meters. In a properly configured XR scene, the XR Origin is positioned relative to this tracking origin. To place model hands in the virtual scene so that they line up with the user's real hands, make the hand model a child of the XR Origin's Camera Offset object in the scene hierarchy; you can then set the model's local poses directly from the tracking data. Otherwise, use the XR Origin's pose to transform the hand data into Unity world space.
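When the hand model is not parented under the Camera Offset, the origin's pose has to be applied explicitly. The Python sketch below shows the underlying math, assuming the origin has unit scale: rotate the tracking-space position by the origin rotation, add the origin position, and pre-multiply the rotations. Quaternions are `(w, x, y, z)` tuples and the function names are hypothetical; in Unity the Transform hierarchy normally performs this composition for you.

```python
import math

# Quaternions are (w, x, y, z) tuples; vectors are (x, y, z) tuples.


def quat_mul(a, b):
    """Hamilton product a * b (the result applies b first, then a)."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )


def rotate(q, v):
    """Rotate vector v by the unit quaternion q."""
    w, x, y, z = q
    vx, vy, vz = v
    # t = 2 * (u x v), where u = (x, y, z) is the vector part of q
    tx = 2 * (y * vz - z * vy)
    ty = 2 * (z * vx - x * vz)
    tz = 2 * (x * vy - y * vx)
    # v' = v + w * t + u x t
    return (
        vx + w * tx + (y * tz - z * ty),
        vy + w * ty + (z * tx - x * tz),
        vz + w * tz + (x * ty - y * tx),
    )


def tracking_to_world(origin_pos, origin_rot, local_pos, local_rot):
    """Compose the origin pose with a tracking-space pose (unit scale assumed)."""
    rotated = rotate(origin_rot, local_pos)
    world_pos = tuple(o + r for o, r in zip(origin_pos, rotated))
    world_rot = quat_mul(origin_rot, local_rot)
    return world_pos, world_rot


if __name__ == "__main__":
    s = math.sqrt(0.5)
    yaw_90 = (s, 0.0, s, 0.0)  # 90 degrees about +Y
    identity = (1.0, 0.0, 0.0, 0.0)
    pos, rot = tracking_to_world((1.0, 0.0, 2.0), yaw_90, (0.0, 1.2, 0.5), identity)
    print(pos, rot)
```

In the usage example, an origin at (1, 0, 2) turned 90 degrees about +Y maps a hand 0.5 m in front of the tracking origin to roughly (1.5, 1.2, 2.0) in world space.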

Note

Unity uses a left-handed coordinate system, with the positive Z axis pointing forward. OpenXR, by contrast, uses a right-handed coordinate system. Provider plug-in implementations are required to transform the data they provide into the Unity coordinate system.
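One common way to perform this conversion is to mirror the Z axis. The sketch below assumes the source data uses OpenXR's convention (+X right, +Y up, -Z forward). Positions and linear velocities negate Z, while rotations and angular velocities, which behave as axial vectors under a mirror, negate X and Y instead. The function names are hypothetical, quaternions are `(w, x, y, z)` tuples, and an actual provider plug-in may implement the conversion differently.

```python
# Hypothetical helpers: OpenXR (right-handed, -Z forward) to Unity (left-handed, +Z forward).


def position_to_unity(p):
    """Mirror a position (or linear velocity) across the XY plane."""
    x, y, z = p
    return (x, y, -z)


def linear_velocity_to_unity(v):
    """Linear velocity is an ordinary vector, so it converts like a position."""
    return position_to_unity(v)


def rotation_to_unity(q):
    """Mirror a (w, x, y, z) quaternion: the axis is axial, so X and Y flip sign."""
    w, x, y, z = q
    return (w, -x, -y, z)


def angular_velocity_to_unity(v):
    """Angular velocity is an axial vector, so it converts like a rotation axis."""
    x, y, z = v
    return (-x, -y, z)
```

OpenXR's forward direction (0, 0, -1) maps to (0, 0, 1), which is forward in Unity, while right and up are unchanged.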

The tracking data supplied by the hand API includes:

| Name | API | Description |
|---|---|---|
| Hand pose | XRHand.rootPose | The position and rotation of a hand. Positions are in meters and the rotation is expressed as a quaternion. |
| Joint pose | XRHandJoint.TryGetPose | The position and rotation of a joint. The term "joint" is used loosely here: the list of joints provided by XRHand also includes the fingertips and the palm. |
| Joint radius | XRHandJoint.TryGetRadius | The distance from the center of the joint to the skin surface. |
| Joint linear velocity | XRHandJoint.TryGetLinearVelocity | A vector expressing the speed and direction of the joint's motion, in meters per second. |
| Joint angular velocity | XRHandJoint.TryGetAngularVelocity | A vector expressing a joint's rotational velocity. The direction of this vector is the axis of rotation and its magnitude is the angular speed in radians per second. |
| Grip | XRHandDevice.gripPosition, XRHandDevice.gripRotation | The position and orientation of the center of mass of a hand in a grip pose. |
| Pinch | XRHandDevice.pinchPosition, XRHandDevice.pinchRotation | The position and orientation of the point between the thumb and the index finger when the hand is in a pinching pose. |
| Poke | XRHandDevice.pokePosition, XRHandDevice.pokeRotation | The position and orientation of the index fingertip when the hand is in a poking pose. |
| Device gestures, including pinch, menu pressed, and system | MetaAimHand.aimFlags | Hand gestures reported through the Meta Aim Hand extension to OpenXR. |
| Aim direction and position | MetaAimHand.devicePosition, MetaAimHand.deviceRotation | A pose indicating where a device gesture is pointing, which can be used for UI and other interactions in a scene. |

The XRHand, XRHandJoint, and XRHandDevice objects are always available from a provider plug-in that supports the XRHandSubsystem. However, a provider might not support every possible joint on the hand. You can determine which joints the current device supports with the XRHandSubsystem.jointsInLayout property. Refer to Get provider data layout for more information.
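The sketch below models how a layout check might be used, assuming the layout is exposed as one boolean per joint index (True when the provider reports that joint). The plain lists and both helper functions are hypothetical stand-ins, not the Unity API.

```python
from typing import List, Sequence


def supported_joints(joint_names: Sequence[str], joints_in_layout: Sequence[bool]) -> List[str]:
    """Return the names of the joints the provider reports."""
    if len(joint_names) != len(joints_in_layout):
        raise ValueError("expected exactly one layout flag per joint")
    return [name for name, present in zip(joint_names, joints_in_layout) if present]


def unsupported_joints(joint_names: Sequence[str], joints_in_layout: Sequence[bool]) -> List[str]:
    """Return the joints that a hand model should not expect to receive."""
    if len(joint_names) != len(joints_in_layout):
        raise ValueError("expected exactly one layout flag per joint")
    return [name for name, present in zip(joint_names, joints_in_layout) if not present]


if __name__ == "__main__":
    # Hypothetical provider that does not report the palm.
    names = ["Wrist", "Palm", "ThumbTip", "IndexTip"]
    layout = [True, False, True, True]
    print(supported_joints(names, layout))
    print(unsupported_joints(names, layout))
```

Because the layout describes what the provider supports rather than a single frame of data, a reasonable design is to query it once when the hand model is set up and skip unsupported joints from then on.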

A provider might not support every type of data for a hand or joint, or might not be able to provide it with every hand update event. In both cases, you can determine which data are valid in an update with the XRHandJoint.trackingState property. Refer to Check data validity for more information.
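The following Python sketch models the validity check as a set of bit flags stored with each joint sample, with accessors that mimic a TryGet pattern by returning `(True, value)` only when the matching flag is set. The flag names, bit values, and the `JointSample` record are illustrative assumptions loosely modeled on XRHandJoint.trackingState, not its actual definition.

```python
from dataclasses import dataclass
from enum import IntFlag
from typing import Tuple

Vector3 = Tuple[float, float, float]


class JointTrackingState(IntFlag):
    """Hypothetical validity flags; member names and bit values are assumptions."""
    NONE = 0
    POSE = 1
    RADIUS = 2
    LINEAR_VELOCITY = 4
    ANGULAR_VELOCITY = 8


@dataclass
class JointSample:
    """Hypothetical per-update joint record: raw values plus validity flags."""
    tracking_state: JointTrackingState
    position: Vector3 = (0.0, 0.0, 0.0)
    radius: float = 0.0
    linear_velocity: Vector3 = (0.0, 0.0, 0.0)


def _try_get(joint: JointSample, flag: JointTrackingState, value):
    """Return (True, value) if the flag is set for this update, else (False, None)."""
    if joint.tracking_state & flag:
        return True, value
    return False, None


def try_get_position(joint: JointSample):
    return _try_get(joint, JointTrackingState.POSE, joint.position)


def try_get_radius(joint: JointSample):
    return _try_get(joint, JointTrackingState.RADIUS, joint.radius)


def try_get_linear_velocity(joint: JointSample):
    return _try_get(joint, JointTrackingState.LINEAR_VELOCITY, joint.linear_velocity)
```

Returning a success flag alongside the value forces the caller to handle the invalid case, rather than silently reading stale or default data.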

The MetaAimHand data is supplied by an optional OpenXR extension, so provider plug-ins are not required to support it. At runtime, check MetaAimHand.aimFlags to determine whether the data in a MetaAimHand object is valid.
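A runtime check of the aim flags could look like the Python sketch below. The `AimFlags` members and bit values are modeled on the flags of the OpenXR XR_FB_hand_tracking_aim extension as an assumption and should be checked against the real definitions; the helper functions are hypothetical.

```python
from enum import IntFlag


class AimFlags(IntFlag):
    """Hypothetical model of MetaAimHand.aimFlags; bit values are assumptions."""
    NONE = 0
    COMPUTED = 0x1
    VALID = 0x2
    INDEX_PINCHING = 0x4
    MIDDLE_PINCHING = 0x8
    RING_PINCHING = 0x10
    LITTLE_PINCHING = 0x20
    SYSTEM_GESTURE = 0x40
    DOMINANT_HAND = 0x80
    MENU_PRESSED = 0x100


def aim_pose_is_valid(flags: AimFlags) -> bool:
    """True when the VALID bit is set, so the aim position and rotation can be used."""
    return bool(flags & AimFlags.VALID)


def gesture_active(flags: AimFlags, gesture: AimFlags) -> bool:
    """Report a gesture only when the aim data is also marked valid."""
    return aim_pose_is_valid(flags) and bool(flags & gesture)


if __name__ == "__main__":
    raw = AimFlags(0x102)  # VALID | MENU_PRESSED
    print(aim_pose_is_valid(raw), gesture_active(raw, AimFlags.MENU_PRESSED))
```

Gating gestures on the VALID bit is a conservative design choice: a gesture bit reported alongside invalid aim data is ignored rather than acted on.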